Enhancing Trading Chart Performance with WebAssembly in Deriv Apps

WebAssembly in Deriv: Trading charts

Charts are among the key components of trading applications and are used in multiple Deriv apps, namely DTrader and DBot.

Our main objective going forward is to build a chart library with high performance and smooth rendering. We are currently considering using CanvasKit in our charts to push the boundaries of performance.

CanvasKit is a WebAssembly module that draws onto canvas elements with Skia, providing a more advanced feature set than the Canvas Web API.

We are also planning to use WebAssembly to calculate the position of the markers in the chart. Markers are added to the charts to indicate the time of purchase and the price level at which the user has purchased a contract. The position of the markers needs to be recalculated as the chart is zoomed in and out.

We will use WebAssembly to compute the position of the markers and CanvasKit to plot the markers on the chart to make the rendering quick and fluid.
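To make the marker calculation concrete, here is a minimal sketch of what such a position function might look like. It is written in Python purely for readability; the `Viewport` structure, the `marker_position` name, and the simple linear mapping are assumptions for illustration, not the actual Deriv implementation.

```python
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Viewport:
    """Visible chart window (hypothetical structure, for illustration only)."""
    start_epoch: float  # leftmost visible time
    end_epoch: float    # rightmost visible time
    min_price: float    # price at the bottom edge
    max_price: float    # price at the top edge
    width_px: float
    height_px: float


def marker_position(view: Viewport, epoch: float, price: float) -> Tuple[float, float]:
    """Map a purchase time and price level to canvas pixel coordinates."""
    x = (epoch - view.start_epoch) / (view.end_epoch - view.start_epoch) * view.width_px
    # Canvas y grows downwards, so higher prices map to smaller y values.
    y = (view.max_price - price) / (view.max_price - view.min_price) * view.height_px
    return x, y


# The same marker lands on a different pixel once the user zooms in,
# which is why positions must be recalculated on every zoom.
zoomed_out = Viewport(0, 100, 90.0, 110.0, 800, 400)
zoomed_in = Viewport(40, 60, 95.0, 105.0, 800, 400)
print(marker_position(zoomed_out, 45, 102.0))
print(marker_position(zoomed_in, 45, 102.0))
```

A function like this is pure arithmetic over many markers per frame, which is the kind of work that fits well in a compiled Wasm module.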

We successfully developed a proof-of-concept for our current trading charts using WebAssembly. In this post, we will share what we learned and a few WebAssembly best practices we picked up while working on the proof-of-concept.

What is WebAssembly?

WebAssembly, abbreviated as Wasm, is a technology that makes it possible to run high-performance, low-level code in browsers. Simply put, Wasm is a binary instruction format and an execution environment (a portable stack machine) that serves as a compilation target for other programming languages. It can be embedded into a web browser to provide a common way to run languages other than JavaScript.

The WebAssembly Core Specification was named an official web standard by the World Wide Web Consortium (W3C) on 5 December 2019.

Why WebAssembly?

WebAssembly offers multiple advantages. It is a low-level assembly-like language with a compact binary format that runs with near-native performance. It provides a compilation target so languages with low-level memory models such as C++ and Rust can run on the web.

WebAssembly seeks to be hardware-independent, language-independent, and platform-independent. As such, it can target all modern architectures, on desktop and mobile devices as well as embedded systems. WebAssembly programs can be embedded in browsers, run in a stand-alone VM, or be integrated into other environments.

You can find all languages and tools that work with WebAssembly here. Notable languages are C/C++, C#/.NET, Rust, Java, Python, Elixir, and Go.

Security

The security model of WebAssembly has two important goals:

- Protecting users from buggy or malicious modules

- Providing developers with useful primitives and mitigations for developing safe applications, within the constraints of the first point above

Security for users

Each WebAssembly module executes within a sandboxed environment separated from the host runtime using fault isolation techniques. What does this mean for the user?

- Applications execute independently and can't escape the sandbox without going through appropriate APIs.

- Applications generally execute deterministically with limited exceptions.

Additionally, each module is subject to the security policies of its embedding. Within a web browser, this includes restrictions on information flow through the same-origin policy. On a non-web platform, this could include the POSIX security model.

Security for developers

WebAssembly's design promotes safe programs by eliminating dangerous features from its execution semantics while maintaining compatibility with programs written for C/C++.

Modules must declare all accessible functions and their associated types at load time, even when dynamic linking is used. This allows implicit enforcement of control-flow integrity (CFI) through structured control flow. Since compiled code is immutable and not observable at runtime, WebAssembly programs are protected from control flow hijacking attacks.

Streamability

The WebAssembly.instantiateStreaming() function compiles and instantiates a WebAssembly module directly from a streamed underlying source. This is the most efficient, optimized way to load Wasm code.

Writing WebAssembly code

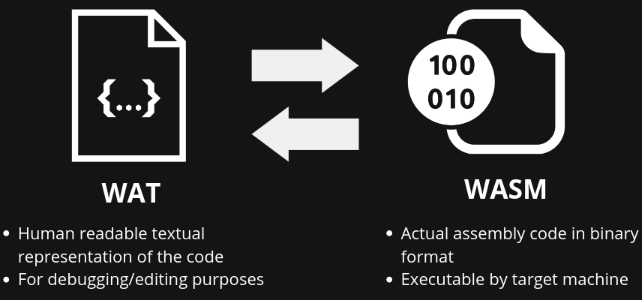

WebAssembly has a binary format and a text format. The binary format (.wasm) is a compact binary instruction format for a stack-based virtual machine, designed to be a portable compilation target for higher-level languages such as C, C++, Rust, C#, Go, and Python. The text format (.wat) is a human-readable format designed to help developers view the source of a WebAssembly module. The text format can also be used to write code by hand, which is then compiled into the binary format.
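To give a feel for the binary format, the sketch below inspects a tiny hand-assembled module in Python. Every .wasm file starts with the four magic bytes `\0asm` followed by a 4-byte version number, and the rest is a sequence of sections, each with an id and a LEB128-encoded size. The byte sequence and the helper names `read_uleb128` and `list_sections` are illustrative assumptions, not part of any toolchain.

```python
import struct

# A hand-assembled module exporting add(i32, i32) -> i32 (illustrative bytes).
ADD_MODULE = bytes([
    0x00, 0x61, 0x73, 0x6D,  # magic: "\0asm"
    0x01, 0x00, 0x00, 0x00,  # version 1, little-endian
    0x01, 0x07, 0x01, 0x60, 0x02, 0x7F, 0x7F, 0x01, 0x7F,  # type section
    0x03, 0x02, 0x01, 0x00,                                # function section
    0x07, 0x07, 0x01, 0x03, 0x61, 0x64, 0x64, 0x00, 0x00,  # export section ("add")
    0x0A, 0x09, 0x01, 0x07, 0x00,                          # code section header
    0x20, 0x00, 0x20, 0x01, 0x6A, 0x0B,  # local.get 0, local.get 1, i32.add, end
])


def read_uleb128(data, offset):
    """Decode an unsigned LEB128 integer; return (value, next_offset)."""
    result = 0
    shift = 0
    while True:
        byte = data[offset]
        offset += 1
        result |= (byte & 0x7F) << shift
        if byte & 0x80 == 0:
            return result, offset
        shift += 7


def list_sections(data):
    """Check the header and return (version, [(section_id, size), ...])."""
    if data[:4] != b"\x00asm":
        raise ValueError("not a WebAssembly binary")
    (version,) = struct.unpack_from("<I", data, 4)
    sections = []
    offset = 8
    while offset < len(data):
        section_id = data[offset]
        size, offset = read_uleb128(data, offset + 1)
        sections.append((section_id, size))
        offset += size  # skip the section body
    return version, sections


print(list_sections(ADD_MODULE))
```

The whole module, including the exported function, is 41 bytes, which illustrates how compact the format is.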

Direct writing of Wasm code

It is possible to write WebAssembly manually.

    (module
      (func $print (param $num1 i32) (result i32)
        local.get $num1
      )
      (export "print" (func $print))
    )

The code above is written in the WebAssembly text format. It defines a function that takes one 32-bit integer parameter and returns it, then exports that function to the host environment under the name print.

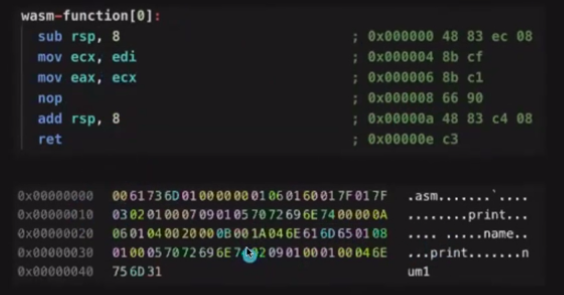

The compiled WebAssembly code would look like this:

The first part is the assembly code targeting x86 machines and the second one is the binary output of the code.

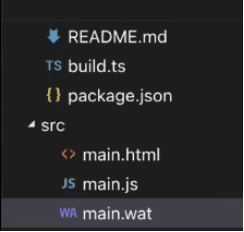

Let's dig a little deeper into another example of Wasm. Imagine we have a project with a structure like this:

As you may know, this is a basic front-end project, except for the .wat file, which I will describe shortly.

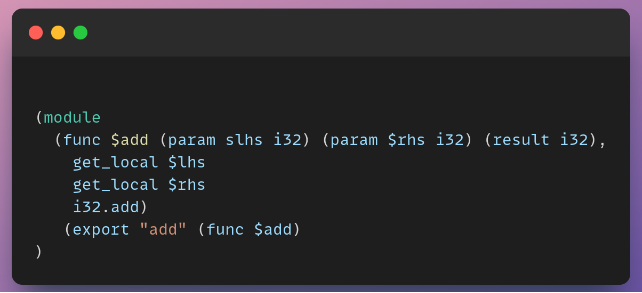

The following code snippet is the main.wat file:

This WebAssembly snippet gets two parameters, adds them together, and returns the result.

Wasm is a stack machine: instructions push values onto and pop values off a LIFO (last in, first out) stack. In the example above, both parameters are pushed onto the stack, then i32.add pops them and pushes their sum, so the stack ends up containing exactly one i32 value, the result of the expression ($lhs + $rhs). The return value of a function is just the final value left on the stack.
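The stack behaviour can be simulated with a few lines of Python. This is only a toy evaluator for a three-instruction subset, assuming the body of the add function is `local.get $lhs`, `local.get $rhs`, `i32.add`; the `run` function and the tuple encoding of instructions are made up for this sketch.

```python
def run(instructions, params):
    """Evaluate a tiny subset of Wasm-style instructions on an operand stack."""
    stack = []
    for op, *args in instructions:
        if op == "local.get":
            # Push the value of a parameter onto the stack.
            stack.append(params[args[0]])
        elif op == "i32.const":
            stack.append(args[0])
        elif op == "i32.add":
            # Pop two operands and push their sum, wrapping at 32 bits
            # (the result is shown here as an unsigned value).
            rhs = stack.pop()
            lhs = stack.pop()
            stack.append((lhs + rhs) & 0xFFFFFFFF)
        else:
            raise ValueError(f"unsupported instruction: {op}")
    # The function result is whatever is left on top of the stack.
    return stack.pop()


add_body = [("local.get", 0), ("local.get", 1), ("i32.add",)]
print(run(add_body, [1, 1]))  # prints 2
```

Each instruction only touches the top of the stack, which is what makes the format both compact and easy to validate.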

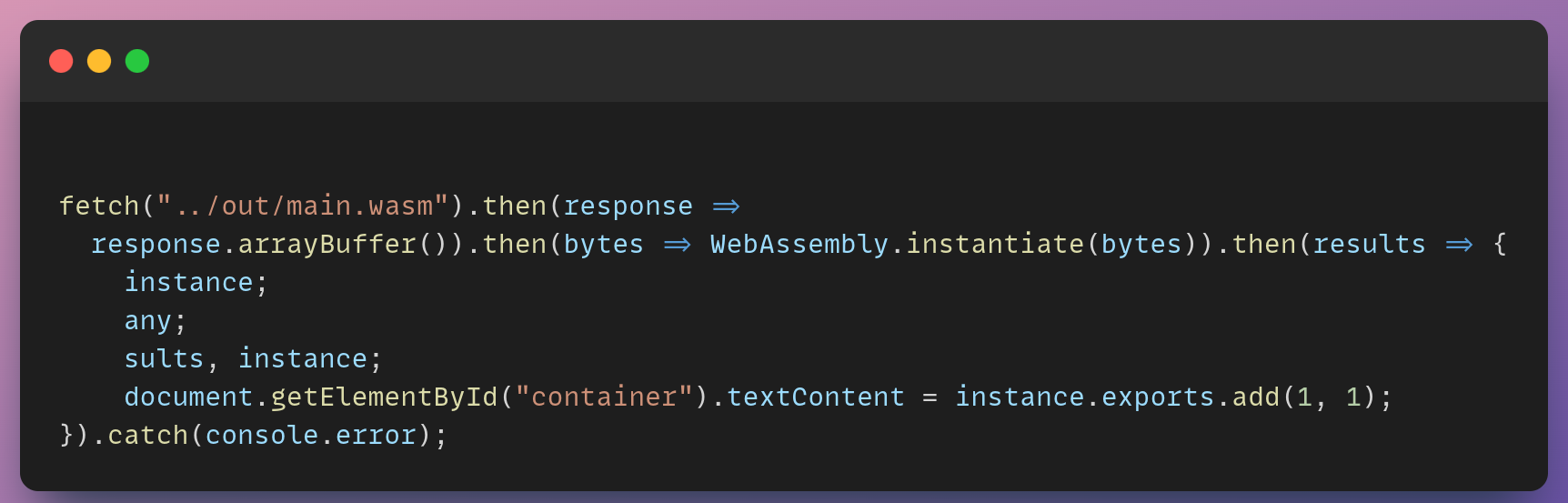

And this is how we can import and use the Wasm code inside our main.js file:

This example uses the non-streaming way of loading Wasm. This method doesn't access the byte code directly, so it requires an extra step to turn the response into an ArrayBuffer before compiling and instantiating the Wasm module.

Finally, we write the value of add(1, 1) into the DOM element whose id attribute is container.

WebAssembly Opcodes

Opcodes are the numeric codes that tell the machine which operation to perform. One of the reasons Wasm is so compact is that the entire set of instructions it supports fits on one page. Take a look at this page to see a list of Wasm opcodes.

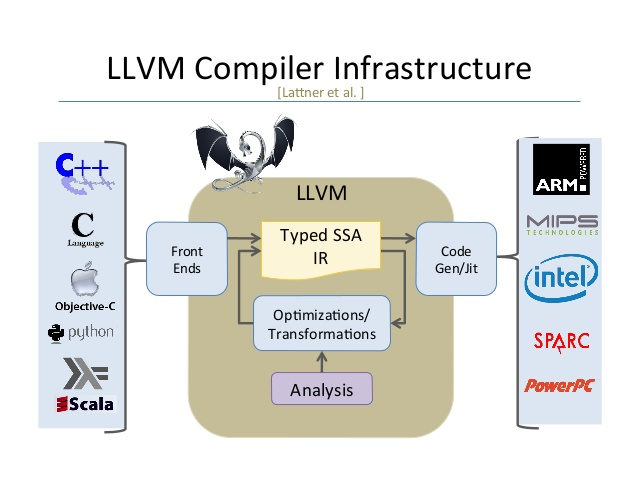

LLVM

In this section, we are going to talk about how this platform-independent compilation is possible.

LLVM is a set of compiler and toolchain technologies, which can be used to develop a front end for any programming language and a back end for any instruction set architecture.

LLVM currently supports compiling of Ada, C, C++, D, Delphi, Fortran, Haskell, Julia, Objective-C, Rust, and Swift.

LLVM Compiler design

Front end

The front end analyzes the source code to build an internal representation of the program, called the intermediate representation (IR).

An IR is a data structure or code used internally by a compiler or virtual machine to represent source code. It is designed to be conducive to further processing, such as optimization and translation. The front end also manages the symbol table.

A symbol table is a data structure used by a language translator such as a compiler. Each entry stores information about one identifier (or symbol) in the program, such as a variable, constant, procedure, or function.
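As a rough illustration, a symbol table with nested scopes can be sketched in a few lines of Python. The `SymbolTable` class and its fields are hypothetical and far simpler than what a real compiler front end such as LLVM's keeps.

```python
class SymbolTable:
    """Maps identifiers to their attributes, with a link to the enclosing scope."""

    def __init__(self, parent=None):
        self.parent = parent
        self.entries = {}

    def define(self, name, kind, type_name):
        self.entries[name] = {"kind": kind, "type": type_name}

    def lookup(self, name):
        # Search the current scope first, then walk outwards.
        scope = self
        while scope is not None:
            if name in scope.entries:
                return scope.entries[name]
            scope = scope.parent
        raise KeyError(name)


global_scope = SymbolTable()
global_scope.define("add", kind="function", type_name="(i32, i32) -> i32")

function_scope = SymbolTable(parent=global_scope)
function_scope.define("lhs", kind="parameter", type_name="i32")

print(function_scope.lookup("lhs"))
print(function_scope.lookup("add"))
```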

Middle end

The middle end, also known as the optimizer, performs optimizations on the intermediate representation in order to improve the performance and the quality of the produced machine code.

The main phases of the middle end include the following:

- Analysis: program information is gathered from the intermediate language representation derived from the front end. Data-flow analysis, dependency analysis, alias analysis, pointer analysis, and escape analysis are some of its tasks.

- Optimization: the intermediate language representation is transformed into functionally equivalent but faster (or smaller) forms. Popular optimizations are inline expansion, dead-code elimination, constant propagation, loop transformation, and even automatic parallelization.
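Two of the optimizations named above, constant propagation and dead-code elimination, can be sketched on a toy intermediate representation. The `(dest, op, a, b)` tuple format and both function names are invented for this example, and the sketch assumes each name is assigned only once; LLVM's real IR and passes are far more involved.

```python
def fold_constants(ir):
    """Constant propagation and folding over (dest, op, a, b) instructions."""
    known = {}  # names whose value is known at compile time
    out = []
    for dest, op, a, b in ir:
        # Replace operands by their constant value when it is known.
        a = known.get(a, a)
        b = known.get(b, b)
        if op == "const":
            known[dest] = a
            out.append((dest, "const", a, None))
        elif op == "add" and isinstance(a, int) and isinstance(b, int):
            known[dest] = a + b
            out.append((dest, "const", a + b, None))
        else:
            out.append((dest, op, a, b))
    return out


def eliminate_dead_code(ir, live_out):
    """Drop instructions whose results are never used (walks backwards)."""
    live = set(live_out)
    kept = []
    for dest, op, a, b in reversed(ir):
        if dest in live:
            kept.append((dest, op, a, b))
            live.discard(dest)
            live.update(x for x in (a, b) if isinstance(x, str))
    return kept[::-1]


program = [
    ("t0", "const", 2, None),
    ("t1", "const", 3, None),
    ("t2", "add", "t0", "t1"),  # always 5, can be folded
    ("t3", "add", "x", "t2"),   # depends on the runtime input x
    ("t4", "add", "t0", "t0"),  # never used
]
optimized = eliminate_dead_code(fold_constants(program), live_out=["t3"])
print(optimized)  # [('t3', 'add', 'x', 5)]
```

Five instructions shrink to one that computes the same result, which is the "functionally equivalent but faster (or smaller)" transformation in miniature.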

Back end

The back end is responsible for the CPU architecture-specific optimizations and for code generation to run in the CPU.

The main phases of the back end include the following:

- Machine-dependent optimizations: optimizations that depend on the details of the CPU architecture that the compiler targets.

- Code generation: the transformed intermediate language is translated into the output language, usually the native machine language of the system.

Emscripten

Emscripten is a complete open-source compiler toolchain for WebAssembly.

Using Emscripten you can:

- Compile C and C++ code, or any other language that uses LLVM, into WebAssembly, and run it on the Web, Node.js, or other Wasm runtimes.

- Compile the C/C++ runtimes of other languages into WebAssembly, and then run code in those other languages in an indirect way.

Practically any portable C or C++ codebase can be compiled into WebAssembly using Emscripten, ranging from high-performance games that need to render graphics, play sounds, and load and process files through to application frameworks like Qt. Emscripten has already been used to convert a very long list of real-world codebases to WebAssembly, including commercial codebases like the Unreal Engine 4 and the Unity engine.

To see tools that use Emscripten under the hood, we can look at Pyodide, a Python distribution for the browser and Node.js based on WebAssembly. There is also sql.js, which we discuss in more depth below.

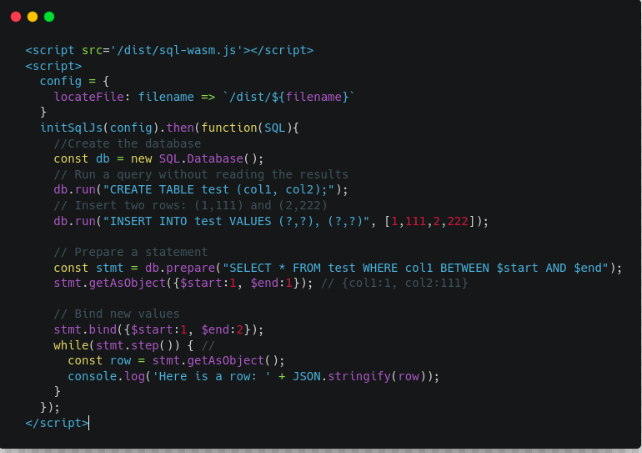

sql.js

sql.js is a JavaScript SQL database: it is SQLite compiled to WebAssembly. It allows you to create a relational database and query it entirely in the browser. It uses a virtual database file stored in memory and thus doesn't persist the changes made to the database. However, it allows you to import any existing SQLite file and export the created database as a JavaScript typed array.

How to use sql.js

As you can see in the code snapshot, we simply injected the sql-wasm.js bundle file into the HTML file. Since we have global access to the initSqlJs method, all it takes is a config object to get access to the SQL object inside its scope. Then, we can simply create a new instance with it and run any SQL query there.

You can find more examples below:

https://sql.js.org/examples/GUI/

https://react-sqljs-demo.ophir.dev/

WebAssembly System Interface (WASI)

Now it's time to see how we can run Wasm outside of the browser. It can run on a server, on a blockchain, or in many other environments. For now, we are going to see how to run it on a server.

WASI stands for WebAssembly System Interface. It is a system interface for WebAssembly that allows you to run WebAssembly outside of your browser.

It is a collection of standardized APIs for communicating with the operating system.

WASI is an API designed by the Wasmtime project that provides access to several operating-system-like features, including files and filesystems, Berkeley sockets, clocks, and random numbers, and it is being proposed for standardization.

It's designed to be independent of browsers, so it doesn't depend on Web APIs or JS, and isn't limited by the need to be compatible with JS. And it has integrated capability-based security, so it extends WebAssembly's characteristic sandboxing to include I/O.

See the WASI overview for more detailed background information, and the WASI tutorial for a walkthrough showing how various pieces fit together.

Note that everything here is a prototype, and while a lot of stuff works, there are numerous missing features and some rough edges. For example, networking support is incomplete.

Several runtimes implement this standard API, Wasmtime among them.

Why WASI

1. Portable: You can compile it once and then you can run it on different CPU architectures.

2. Secure: WASI uses a lightweight sandbox, so it has limited access to system resources.

3. Interoperable: For instance, code written in Rust can talk to code written in Go or C++.

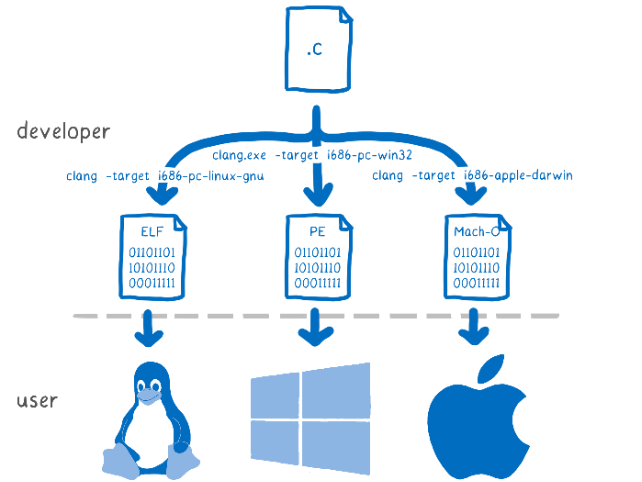

Portability

With a tool such as Clang, you specify the target CPU, and the source code is compiled for that specific CPU architecture.

On the other hand, with WebAssembly, we can compile once and run across a whole bunch of different machines. The binaries are portable.

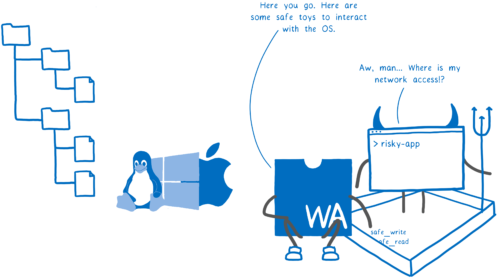

Security

WebAssembly's way of doing security is different. WebAssembly is sandboxed.

This means that the code can't talk directly to the OS. But then how does it do anything with system resources? The host (which might be a browser, or might be a Wasm runtime) puts functions in the sandbox that the code can use.

This means that the host can limit what a program can do on a program-by-program basis. It doesn't just let the program act on behalf of the user, calling any system call with the user's full permissions.

Just having a mechanism for sandboxing doesn't make a system secure in and of itself (the host can still put all of the capabilities into the sandbox, in which case we're no better off), but it at least gives hosts the option of creating a more secure system.
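The idea of a host handing capabilities to a guest can be modelled in a few lines of Python. This is a conceptual toy only: Python itself does not isolate the guest the way a Wasm runtime does, and the `Sandbox` class, `guest_program` function, and capability names are invented for this sketch.

```python
class Sandbox:
    """Toy host: the guest can only call functions the host chose to provide."""

    def __init__(self, imports):
        self._imports = dict(imports)

    def call(self, name, *args):
        if name not in self._imports:
            raise PermissionError(f"capability not granted: {name}")
        return self._imports[name](*args)


def guest_program(host):
    """Stands in for a Wasm module: it only reaches the outside via the host."""
    host.call("log", "hello from the guest")
    return host.call("read_file", "/tmp/data.txt")  # hypothetical path


log_lines = []
# This host grants logging but no file access.
restricted = Sandbox({"log": log_lines.append})

try:
    guest_program(restricted)
    outcome = "allowed"
except PermissionError as error:
    outcome = f"blocked: {error}"

print(log_lines, outcome)
```

A different host could pass a `read_file` function as well, which is the program-by-program control described above.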

Let's see an example that uses Wasm.

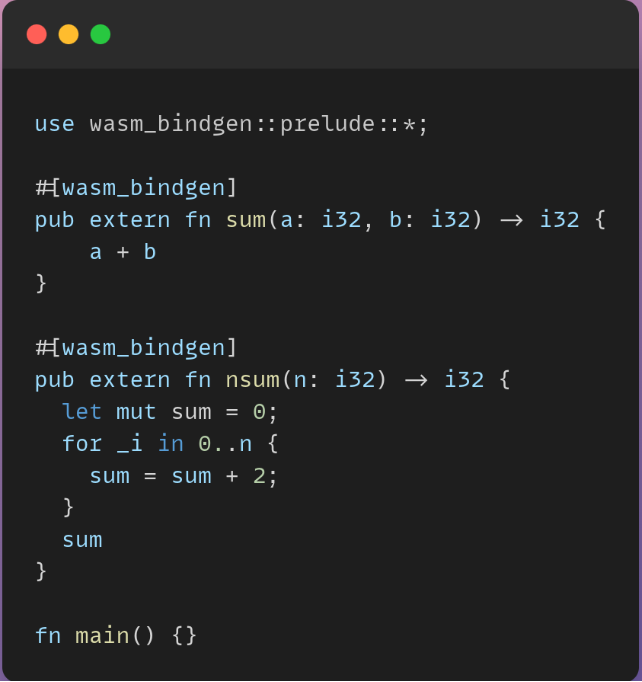

Imagine that we have this function written using Rust that gets two number values, adds them, and returns the result.

We can try to run this code in a Python program. First, we need to install wasm-pack on our machine. Then we can simply run this command on the project containing the above file:

wasm-pack build --target bundler

It generates the compiled Wasm binary for the Rust code (in our case, this is located in /pkg/webassembly_bg.wasm), which we can load in our Python code as below:

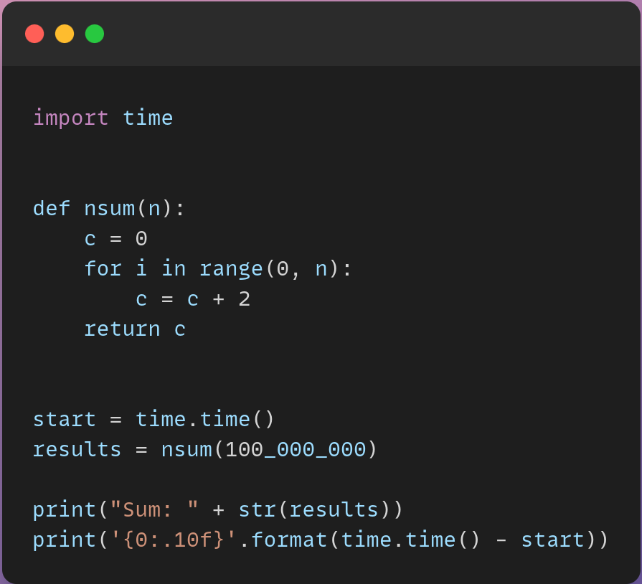

Running a program with and without Wasm

This function will be called a hundred million times. It just adds 2 to each number from 0 to 100,000,000 and sums it up.

In this case on my machine, it took nearly 5 seconds to run this function.
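For readers who want to reproduce the pure-Python side, a loop of this kind might look like the sketch below. The function name `sum_plus_two` and the reduced iteration count are assumptions; the timing on your machine will differ from the figure quoted above.

```python
import time


def sum_plus_two(n):
    """Add 2 to each number in range(n) and sum the results."""
    total = 0
    for i in range(n):
        total += i + 2
    return total


# The article uses 100_000_000 iterations; a smaller count keeps this run short.
n = 1_000_000
start = time.perf_counter()
result = sum_plus_two(n)
elapsed = time.perf_counter() - start
print(f"sum = {result}, took {elapsed:.3f}s")
```

Note that this sum has a closed form, n(n-1)/2 + 2n, and an optimizing compiler may simplify such a loop heavily, so a micro-benchmark like this probably overstates the speed-up you would see on real workloads.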

On the other hand, we have this Python snippet which uses the Wasm binary representation of Rust, doing the same thing.

The difference in speed is huge. The result came back in less than 4 milliseconds, which is awesome!

Current state of Wasm

Right now lots of big projects are using WebAssembly under the hood. Some good examples are Figma, Google Earth, Shopify, and Photoshop.

See the full list of major projects using WebAssembly.

An amazing example of using Wasm on the web is the port of AutoCAD's 35-year-old legacy code, which now runs flawlessly in the browser.

If you like computer games, check out Doom 3. The whole game has been ported to run in the browser, and it renders perfectly!